Polling vs. Long Polling vs. SSE vs. WebSockets vs. Webhooks

Whether you are chatting with a friend or playing an online game, updates show up in real time without you ever hitting “refresh”.

Behind these seamless experiences lies a key engineering decision: how does the server notify the client (or another system) when new data is available?

Traditional HTTP was built around a simple request-response flow: the client asks, the server answers. But in real-time systems, the server often needs to push updates proactively, sometimes continuously.

That’s where communication models like Long Polling, Server-Sent Events (SSE), WebSockets, and Webhooks come in.

In this article, we’ll break down how each one works, its pros and cons, where it fits best, and how to choose the right approach for a production system or a system design interview.

Let's start with the most straightforward approach.

1. Polling

Polling is the simplest approach to getting updates from a server. The client sends requests to the server at regular intervals, checking if anything has changed.

Think of it like refreshing your email inbox every few minutes to check for new messages.

How It Works

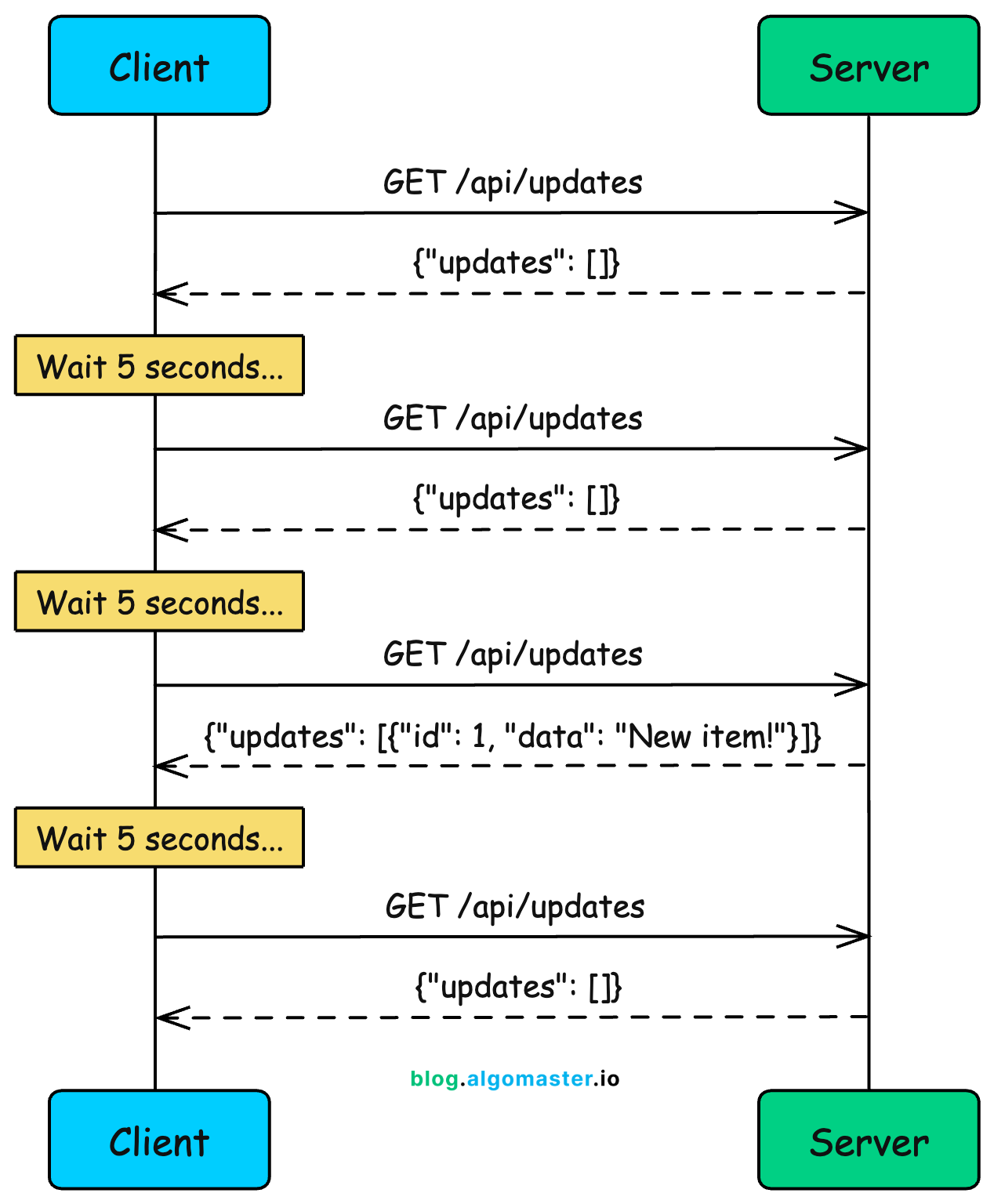

Client sends an HTTP request to the server

Server responds immediately with current data (or empty response)

Client waits for a fixed interval (e.g., 5 seconds)

Client sends another request

Repeat indefinitely

Notice something wasteful here? The client keeps asking even when nothing has changed. Three out of four requests in this diagram returned empty responses.

In real applications, this ratio is often much worse. You might make 100 requests before getting a single meaningful update.

Example: Weather Dashboard

Imagine you’re building a weather dashboard. Weather data doesn’t change that frequently, maybe every 15-30 minutes at most.

Polling makes sense here:

    setInterval(async () => {
      const response = await fetch('/api/weather?city=london');
      const weather = await response.json();
      updateDashboard(weather);
    }, 60000); // Poll every minute

Every minute, your client asks for the current weather. The server responds with temperature, humidity, conditions, and so on.
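One thing a bare setInterval does not handle is a slow or failed response: the next request fires on schedule even if the previous one hasn't finished. A minimal sketch of a more defensive loop is shown here. The names `startPolling`, `fetchData`, and `onData` are hypothetical helpers introduced for illustration, not part of any library.

```javascript
// Sketch: poll with setTimeout so a slow response never overlaps the next request.
// `fetchData` and `onData` are hypothetical callbacks supplied by the caller.
function startPolling(fetchData, onData, intervalMs) {
  let stopped = false;
  let timer = null;

  async function tick() {
    if (stopped) return;
    try {
      const data = await fetchData();
      if (!stopped) onData(data);
    } catch (err) {
      // A failed poll is logged; the loop simply tries again on the next tick.
      console.error('poll failed:', err);
    }
    // Schedule the next poll only after this one has finished.
    if (!stopped) timer = setTimeout(tick, intervalMs);
  }

  tick();

  // Return a function that stops the loop.
  return function stop() {
    stopped = true;
    if (timer !== null) clearTimeout(timer);
  };
}
```

For the weather dashboard, this could be wired up as `startPolling(() => fetch('/api/weather?city=london').then((r) => r.json()), updateDashboard, 60000)`, and the returned function called when the dashboard is closed.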

Pros

Simple to implement: Just a regular HTTP request in a loop. No special protocols or libraries needed.

Works everywhere: Any HTTP client can do polling. No firewall or proxy issues.

Stateless: Each request is independent. The server doesn’t need to maintain any connection state.

Easy to debug: Standard HTTP requests that show up in network logs and dev tools.

Cons

Wasted requests: Most requests return empty responses when nothing has changed. This wastes bandwidth and server resources.

High latency: Updates are delayed by the polling interval. If you poll every 10 seconds, updates can take up to 10 seconds to reach the client.

Doesn’t scale: 10,000 clients polling every second means 10,000 requests per second, even when nothing is happening.

Trade-off between latency and efficiency: Shorter intervals mean faster updates but more wasted requests. Longer intervals mean fewer requests but slower updates.
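To put rough numbers on that trade-off, here is a back-of-the-envelope sketch. The function `pollingCost` is a hypothetical helper, and it assumes every client polls at the same fixed interval and that updates arrive at a random moment within an interval.

```javascript
// Rough polling cost model (an illustration under simplifying assumptions).
function pollingCost(clients, intervalSeconds, updatesPerHour) {
  const requestsPerSecond = clients / intervalSeconds;
  // An update lands at a random point in the interval, so it waits half of it on average.
  const averageDelaySeconds = intervalSeconds / 2;
  const worstCaseDelaySeconds = intervalSeconds;
  const requestsPerClientPerHour = 3600 / intervalSeconds;
  // Each update can make at most one request useful; the rest return nothing new.
  const wastedFraction = Math.max(0, 1 - updatesPerHour / requestsPerClientPerHour);
  return { requestsPerSecond, averageDelaySeconds, worstCaseDelaySeconds, wastedFraction };
}
```

Under these assumptions, 10,000 clients polling every second produce 10,000 requests per second with an average delay of 0.5 seconds. Stretching the interval to 60 seconds cuts the load to roughly 167 requests per second but raises the average delay to 30 seconds.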

When to Use

Low-frequency updates: Weather data, daily reports, or anything that changes infrequently

Simple systems: MVPs, internal tools, or situations where simplicity matters more than efficiency

Legacy compatibility: When you need to support older clients or environments that can’t use modern techniques

Acceptable latency: When delays of several seconds (or minutes) are acceptable

Polling is a reasonable starting point, but you’ll quickly feel its limitations as your application grows. If you need faster updates without drowning your server in requests, that’s where long polling comes in.

2. Long Polling

Long polling improves on regular polling by having the server hold the request open until new data is available (or a timeout occurs). Instead of the client repeatedly asking “anything new?”, the server waits and responds only when there’s something to report.

This was the technique that powered early real-time applications like Facebook Messenger before WebSockets became widely supported.

How It Works

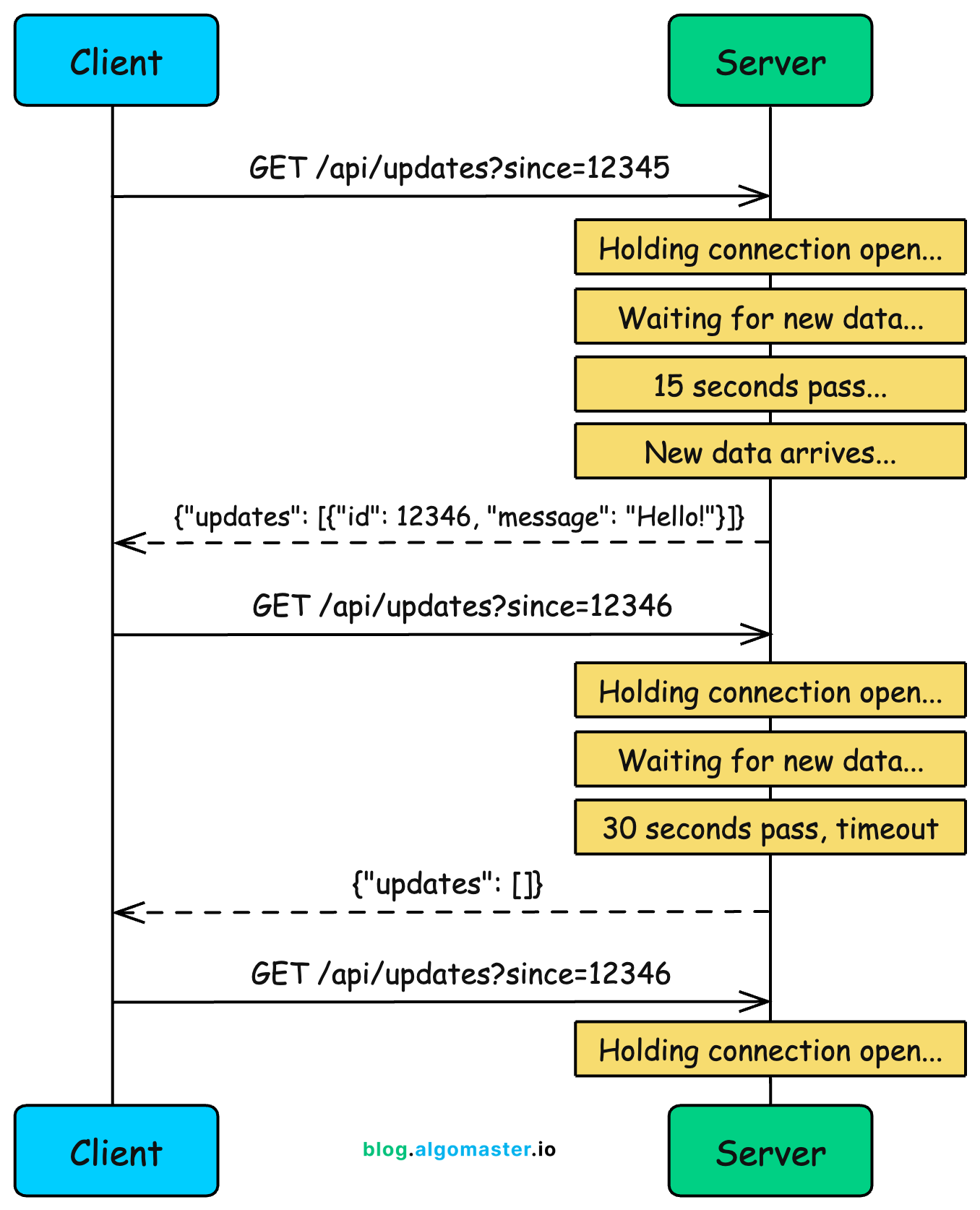

Client sends an HTTP request to the server

Server holds the connection open (doesn’t respond immediately)

When new data arrives, server sends the response

Client immediately sends another request

If no data arrives within the timeout period, server sends an empty response and client reconnects

The key insight is that the server responds only when it has something meaningful to say or when the timeout expires. This eliminates most of the wasted “nothing new” responses of regular polling.

Example: Chat Application

Consider a chat app built with long polling. When you open a conversation, your browser sends a request like:

    GET /api/messages?conversation=123&after=msg_999

The server checks if there are any messages newer than msg_999. If not, instead of returning an empty response, it holds the connection and waits.

When someone sends a new message to that conversation, the server immediately responds with the new message. Your client receives it, renders it in the chat window, and immediately opens a new connection to wait for the next message.
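The client side of this flow can be sketched as a simple loop. This is an illustration rather than a reference implementation: `longPollLoop`, `requestMessages`, and the option names are hypothetical, and it assumes each message carries an `id` that can be sent back as the `after` cursor.

```javascript
// Sketch of a long-polling client loop. `requestMessages(after)` is a hypothetical
// function that resolves with an array of messages newer than `after`
// (an empty array means the server timed out with nothing new).
async function longPollLoop(requestMessages, onMessage, options = {}) {
  const { startAfter = null, retryDelayMs = 1000, shouldStop = () => false } = options;
  let cursor = startAfter;

  while (!shouldStop()) {
    try {
      const messages = await requestMessages(cursor);
      for (const message of messages) {
        onMessage(message);
        cursor = message.id; // the next request asks only for newer messages
      }
      // An empty array means a server-side timeout: loop and reconnect right away.
    } catch (err) {
      // Network error: pause briefly so a failing server is not hammered.
      await new Promise((resolve) => setTimeout(resolve, retryDelayMs));
    }
  }
  return cursor;
}
```

In a browser, `requestMessages` might wrap `fetch` against the endpoint above, for example ``(after) => fetch(`/api/messages?conversation=123&after=${encodeURIComponent(after)}`).then((r) => r.json())``, assuming the endpoint returns a JSON array.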

There’s an important detail here: the timeout. HTTP connections can’t stay open forever. Proxies, load balancers, and browsers all have limits. So the server needs to respond eventually, even if nothing happened.

A typical timeout is 30 seconds. If 30 seconds pass with no new data, the server sends an empty response, the client immediately reconnects, and the wait continues.
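On the server, the "hold until data or timeout" behavior can be sketched independently of any HTTP framework. The class below is a hypothetical in-memory illustration: `LongPollChannel` and its methods are made-up names, and it assumes numeric message IDs (1, 2, 3, ...) rather than the `msg_999` style used in the URL above.

```javascript
// Sketch of the server-side "hold" logic, kept in memory for illustration.
class LongPollChannel {
  constructor() {
    this.messages = []; // ordered log; a message's id is its 1-based position
    this.waiters = new Set(); // requests currently being held open
  }

  // Resolves with messages newer than `afterId`, or [] once `timeoutMs` elapses.
  waitForMessages(afterId, timeoutMs = 30000) {
    const pending = this.messages.slice(afterId);
    if (pending.length > 0) return Promise.resolve(pending);

    return new Promise((resolve) => {
      const waiter = { afterId, resolve, timer: null };
      waiter.timer = setTimeout(() => {
        this.waiters.delete(waiter);
        resolve([]); // timeout: empty response, the client reconnects
      }, timeoutMs);
      this.waiters.add(waiter);
    });
  }

  // Stores a new message and answers every held request.
  publish(text) {
    const message = { id: this.messages.length + 1, text };
    this.messages.push(message);
    for (const waiter of this.waiters) {
      clearTimeout(waiter.timer);
      waiter.resolve(this.messages.slice(waiter.afterId));
    }
    this.waiters.clear();
    return message;
  }
}
```

An HTTP handler would call `waitForMessages` with the client's cursor and write the resolved array as the response body. Because messages are kept in a log and looked up by cursor, anything published while a client is between requests is returned immediately on its next request instead of being lost.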

Pros

Near real-time: Updates arrive almost instantly when they happen, without waiting for a polling interval.

Fewer wasted requests: Responses almost always contain useful data, not empty “nothing new” responses.

Works through proxies and firewalls: Uses standard HTTP, so it works in restrictive network environments where WebSockets might be blocked.

Simpler than WebSockets: No protocol upgrade, no special handling for connection state.

Cons

Resource intensive: Each waiting client holds a connection open on the server. With 10,000 clients, you need 10,000 open connections.

Timeout handling complexity: You need to handle timeouts, reconnection logic, and edge cases like the client receiving data just as the timeout expires.

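Gaps between requests: Events that occur after one response is sent and before the next request arrives must be buffered on the server. Otherwise they can be delayed, batched together, or missed, so clients typically send a cursor (like the after parameter above) to catch up.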

HTTP overhead: Every response requires a new request, and each request carries HTTP headers. This overhead adds up.

When to Use

Chat applications (historically): Before WebSocket support was universal, long polling powered many web chat systems

Fallback mechanism: When WebSockets aren’t available due to proxy or firewall restrictions

Server-initiated updates: When clients mostly receive data rather than send it

Moderate scale: Works well for hundreds or thousands of concurrent connections, but gets expensive at massive scale

Long polling feels like “almost real-time,” but it’s still request-response at heart. The client still initiates every exchange. What if the server could just push data to clients whenever it wants? That’s exactly what Server-Sent Events enable.